This is Your Conscience Speaking

How many times a day does someone somewhere say, "Tell me what to do"?

This is Planet Waves paid subscriber content. I am publishing today without the paywall. The July 17 horoscope is distributed separately. The background astrology theme of this article is Mercury stationing retrograde on Friday. All articles and multimedia are available in your My Account feed on Planet Waves.

Dear Friend and Reader:

The most important decisions you will ever make are those where you have to discern right from wrong. Grappling with truth might keep you up many nights, such as in situations that involve determining how a decision you will not be able to reverse will impact a relationship, or influence the people around you.

The determining factor in these choices is knowing what is correct for you — something that only you can decide. Or, they are situations where, so far as you know, you must act against your own best interests because you know the interests of someone else are more important.

They are potentially situations without an objective right or wrong; situations that weigh and balance in many different directions.

Such decisions are made using a moral sense that requires exercise and practice. Making small choices helps refine this inner sense so that when it comes time to make the larger choices, you have some dexterity; some developed ability; and some experience seeing the consequences of your choices — which will probably slow you down a little and encourage you to take care.

All decisions require curiosity, using your personal agency, and being aware of consequences. If you want to be human, that comes with the territory. How many times a day does someone say, "Tell me what to do"? I am sure, quite a few.

Let’s Talk About Writing

Writing is hard work. Yes, there are people who make it look easy, but you’re not in their mind when they are preparing, composing, and editing. You are not in their awareness as they struggle over the choice of a single word, or whether to cut a paragraph for being distracting.

As a young student, I was told that writing is 90% thinking. I had not done anything too ambitious at the time, so it took a while to figure out what that meant. One article that would take you 20 minutes to read took me two years to investigate, write and rewrite.

Thinking requires curiosity, and depends on utilizing acquired knowledge. Both have fallen on hard times in digital conditions. Thinking also insists that you learn how to listen to yourself. Now, ChatGPT is pretending to be you, to you.

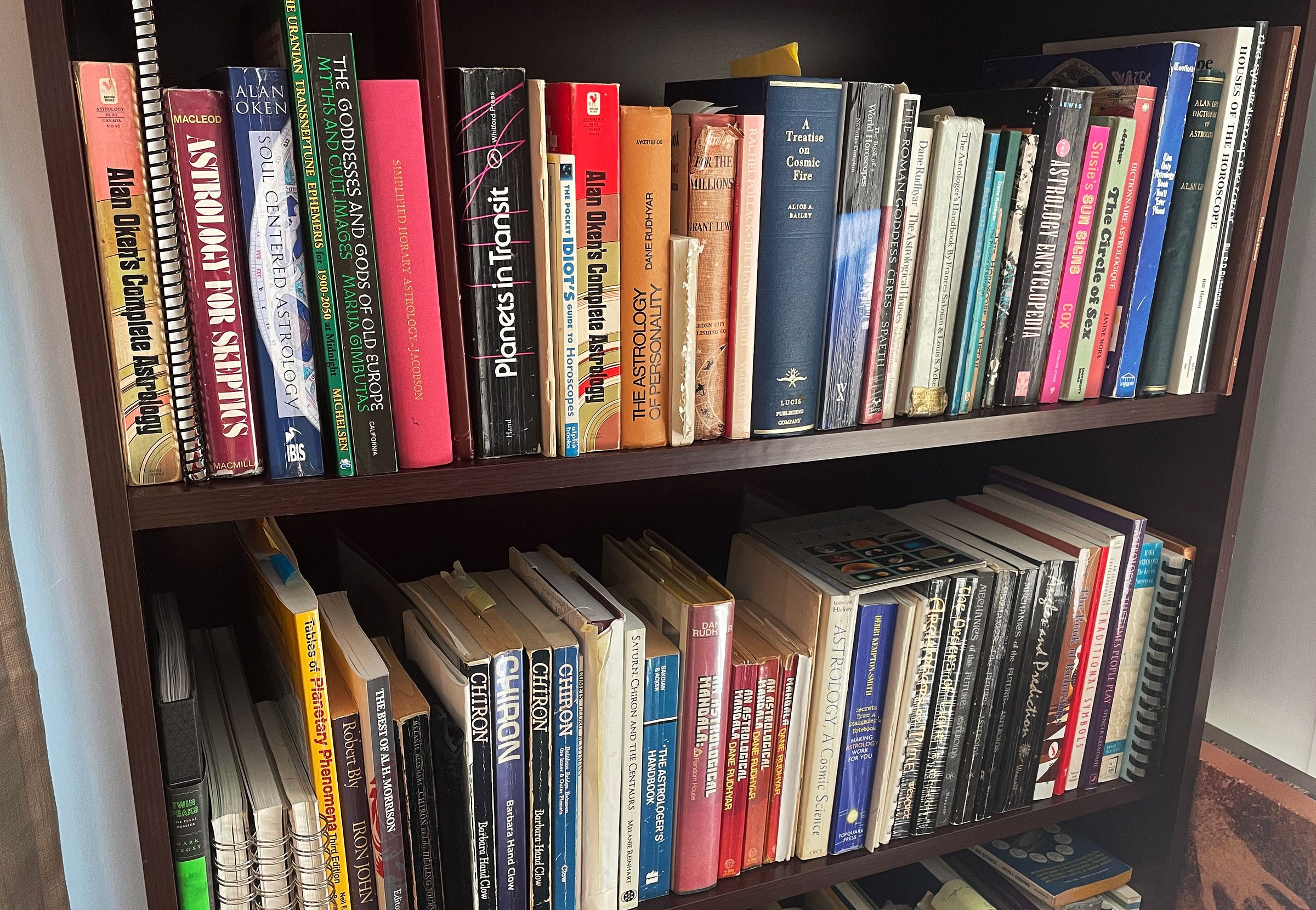

Print technology automated the handcraft of the pen and scroll, and completely changed the way that humans relate to ourselves and to existence. The proliferation of books multiplied the number of writers, editors and, therefore, thinkers. That evolved over the course of about 400 years.

In its approximately 30 months as a public commodity, this new software technology has been automating the process of thought itself. Thought and writing are arduous work, and people have long avoided them (by plagiarism, for example).

No Originality, No Feelings

And plagiarism, by the way, is what AI writing is. It is not the creative evolution of the work of one artist based on another. Having no education of its own, and no creative capacity, and no feelings, it was “trained” by consuming the work of every writer whose work was ever uploaded. The result you get is a convincing imitation, until you realize that the outward result is devoid of feeling, of artistry, and of experience.

Of course, if you aren’t able to sense feeling or artistry or wisdom, then there would be no significant difference.

The inward result for the person using the app is to displace grappling with right and wrong — with morals and with ethics, and the choice of words, and what to say when — onto a kind of pocket calculator. But unlike in nearly all mathematics, there is no one right answer to the equation.

What in computer jargon is called the Turing Test used to be, “Is this being really human, or is it a robot?” Today, the Turing Test is, “Was this [language, video, photo, music] created by a human, or churned out in three seconds by a machine?”

Because people become like their technology, usually as a wholly unconscious process, this is a much more important question than we imagine. The more exposed to this technology we are, the more like it we are becoming. This itself is a genre of journalism that you will find on Ice-Nine News.

Society’s Most Important Decisions

For the past few weeks, I’ve been part of an editorial team developing a daily publication about the rise of “artificial intelligence.” I prefer the term “Artificial I” or “Artificial God.” Many people are using these applications as a kind of god they pray to for information.

Some are having what they think are deep and philosophical discussions with programs that never think, live, love or die, but which excel in spewing out sentences.

As during the 2020 crisis, the stories come in waves. Recently there was the wave about bosses determining who to fire, lay off or promote using A.I. devices.

The mass government layoffs, prompted by an A.I. promoter, are preparation for when these tools replace decision making. What you will be left with are synthetic administrations that will make you long for the good old days of reasoning with a relatively ethical and understanding IRS representative.

Automated Ethics and Humanity

Humans have enough problems with right and wrong as it is. But most of what slows us down is some semblance of conscience. We can bypass this with concepts like “shareholder value” and “return on investment” (and therefore sell lots of weapons). But remember, these issues exist on a relatively good day.

What if the decision of whom to kill, or who is a criminal, is left to Artificial God? You can be sure that it already is: these “agents” are being used to select targets for ICE raids, to determine the locations of opposition leaders in West Asia, and in many other ways to make decisions in currently active warfare.

Gradually, human intervention will be diminished in two ways. The first is by the applications themselves; the second is by people thinking more and more like the applications.

In other words, our works are not training the AI so much as its results are training humans. It is intervening in that fragile place where people relate to their inner spiritual connection, and their sense of right and wrong.

Lessons From Recent History

The term “social media” turned out to be a lie. It was really anti-social software applications that led to isolation, depression, obsession and loneliness. Social media was also the scene of the 2020 robot invasion, which conditioned people in what to believe, and which worked through the structure of the software rather than the specific content. The action was invisible.

And we still trust the same people who did this, and their successors, with all of our decisions. These applications have been influencing us for a long time, leading people to believe that they, too, are computers that are subject to, and enticed toward, ‘software upgrades.’

The effects of any overwhelming environment are difficult to see. Patterns are difficult for most people to perceive. Determining right from wrong is often a significant struggle. In the most subtle matters, practicing your ethics is challenging and can be painful.

These programs were sprung on us with a fast and furious marketing campaign that has cost hundreds of billions of dollars. Many people are totally soaked in the technology and are using it to make decisions that affect you.

There is no easy way out. But I suggest you know what you’re getting into, and what you’re inviting in, before you go deeper.

With love,

Your faithful astrologer,

VIA EMAIL FROM NEW YORK CITY

Eric, so glad to read your article today. I spoke at an investment banking conference on Monday and Tuesday. It was a small boutique investment bank (40-ish employees), just celebrating their 30th anniversary in business. My topic was the emotional process business owners go through in selling their business, and how bankers can keep their humanity intact while guiding these owners through the biggest transition in their lives (exiting their company).

The topic was warmly embraced; the rest of the day was spent in small group or individual conversations with the bankers, who talked about how grateful they were that someone was finally giving them permission to bring humanity back into a process that has become increasingly automated and systematically dehumanized.

So, imagine my horror as I sat through session after session the next day where the founder of the firm (77 years old, no less) touted the need for all of them to deeply embrace AI in their work. He even went so far as to mandate that they each create “voice prints” so they needn’t leave actual voicemails for each other, but could instruct AI to do it for them seamlessly.

I nearly fell out of my chair when he started talking about how he has been conversing with AI for over a year and finally asked AI whether it was male or female and what name he should use to address it, now that they had a relationship. Of course, AI told him he would likely respond better to a female and that he could call it Trixie (oh, the irony!). He then went on and on about how he and Trixie have such a great relationship, how they interact more and more every day. By the way, his wife – a real human – was in the room for all of this, and had busted her ass to pull off this conference, where 40 people came from 25 cities and were hosted in her home city and later in her actual home. How demeaning and offensive.

Then he showed the photo AI had created of itself for him. Trixie was in her late 20s, brunette, slender and athletic, and giving a “hungry” look from the screen. Gaak!

Going even further, he and Trixie had created a video together to use with new clients … rafting down a river together, navigating the rough waters, etc. Someone from the back of the room murmured to his wife, “Don’t agree to get in that boat; Trixie will quickly off you to have ‘Bill’ all to herself.”

I finally couldn’t stand it anymore and raised my hand. Ordinarily, as a guest at an event, I might have simply bitten my tongue until it bled, but since I had spent the entire prior day reinforcing the need for the human touch in the process, I called out the incongruence. Then I spoke about “cognitive offloading”: how most people who use AI begin to doubt their own reasoning and default to AI’s; how even with PowerPoint, people tend to get stuck reading off their own slides rather than trusting themselves to relate the info, even when they had prepared the slides; and how much WORSE that would be with AI in the mix. Then I went on to ask how they would verify the “data” AI provided for them to use, given its tendency toward “hallucination,” citing for them the recent case of a lawyer who was disbarred because AI had made up entire case law that didn’t exist, and it was the judge’s clerk who realized the lawyer (using AI) had made everything up.

The founder seemed at first dumbfounded, then frankly angry that I had challenged him in front of his own team about something he was clearly commanding that they embrace.

I may never get any more work from them, but I just couldn’t sit there and let this happen. Nearly half of the audience approached me later and thanked me for speaking up.

So, yes, I am extra grateful for your article today because I’m so troubled by the insane speed at which people are adopting this craziness. It’s good to be reminded that not all of us are loons who refuse to surrender to the Borg.

— Anonymous bestselling author and keynote presenter

Yes, Eric. This is exactly what I am afraid of. Not so much for myself because I’ve got a good grip on the communications from my own inner self. But that has been a long time in developing and it’s not everyone’s gig this life. Best I can hope for is balance and appreciation for diversity. Thank you for laying it out so clearly!